On Wednesday, Ethan Mollick, an associate professor at Wharton and an AI researcher, brought out his Otter Test again. The test is an informal way of evaluating AI image generators: Mollick uses the cute and cuddly animal to check how accurately a model creates images from prompts that include an otter in one way or another. After trying it on ChatGPT Images 2.0, which is now available to all ChatGPT users, he wrote, “turns out that there is a quality threshold I didn’t expect.” In other words, Images 2.0 aced his Otter Test.

Mollick’s experience with the new AI image generator is apparently not an aberration. Hours after OpenAI rolled out the new tool, calling it “a state-of-the-art image model that can take on complex visual task”, it is leaving many jaws on the floor. It is that good. For example, Mollick, who used the tool early as a beta tester, wrote: “I have been using GPT ImageGen-2 for the past weeks. I didn’t think that better image-generators would be a big deal but it turns out that there is a quality threshold I didn’t expect, where you can now get text, slides, academic papers.”

Apparently, the buzz has something to do with the intelligence built into the new ChatGPT image generator. Unlike most other such tools, it can “think”. In this way, it is not just an AI image tool. Instead, its image-generating abilities are built on top of general intelligence. OpenAI says this general intelligence, with its knowledge cutoff date of December 2025, makes “Images 2.0 significantly better at following detailed instructions, rendering dense text, placing objects accurately, handling a wider range of aspect ratios, and generating images across more languages.”

It is these abilities that people are finding genuinely impressive. The intelligence also marks a leap in the way the AI tool understands prompts and then creates an output. This leap is well reflected on Arena AI, a place where AI image generators go to fight each other. In the latest rankings on the website, GPT Image 2 takes the top spot by a wide margin. “GPT-Image-2 by OpenAI has claimed the No 1 spot across all Image Arena leaderboards,” the website noted. “A clean sweep with a record-breaking 242-point lead in Text-to-Image (is) the largest gap we’ve seen to date.”

Now, we could spend 10,000 words describing an image tool, but as they say, a picture is worth a thousand words. So let’s move on to some pictures, as we saw them on social media.

One of the most impressive images we have seen the new ChatGPT create is a pile of rice with “wow” written on one grain. Here is the photo:

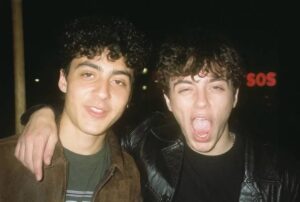

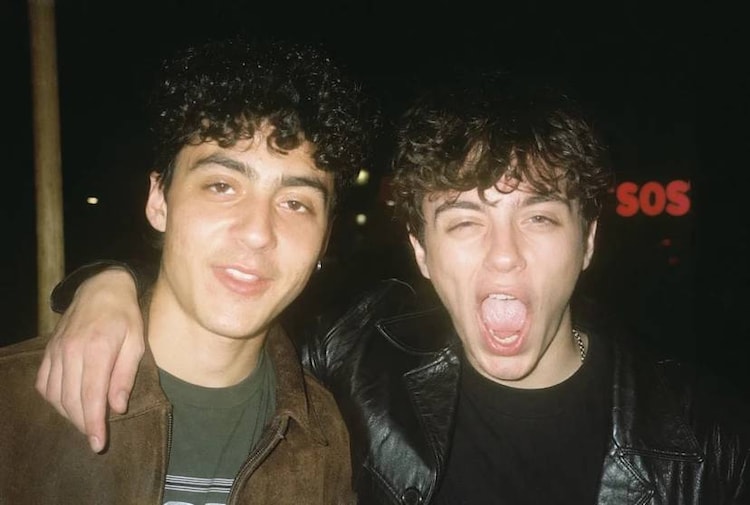

Another image that we have seen is this photo of two men, created in a style that evokes memories of the 1980s and 1990s. The realism in this photo is off the charts, giving the image a near pixel-perfect look.

Of course, it is not perfect. And even Mollick, as big a fan as he seems to be of the new Images 2.0, highlights that. Commenting on the new tool, he wrote: “Though the images are very good, ChatGPT Image 2.0 does have the typical imagegen problem, which is that editing can be stubborn, and attempts to get the AI to change details work well for the first round or two, but then progress slows. Putting the image in a new chat helps.”

Having said that, the leap in AI image generation does seem impressive. People on X are saying that the quality of the new ChatGPT tool is immediately noticeable compared to the previous version, or even compared to Google’s Nano Banana, which has been all the rage until now. From placing and relating objects accurately to rendering dense text, the ChatGPT model appears to deliver visuals that feel far more usable than before. One of the biggest improvements people are pointing out is how well the model handles text inside images, something that has historically been a weak spot for AI tools.