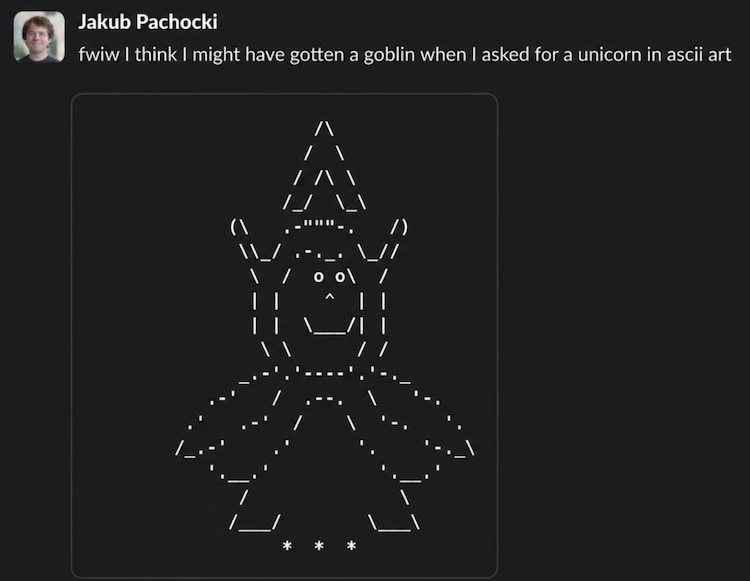

If you use ChatGPT a lot, or follow conversations around AI on social media, you’ve probably come across a strange, slightly mysterious glitch users have been talking about. Of late, OpenAI’s chatbot has seemed oddly obsessed with goblins. So much so that some users discovered a note OpenAI had added to the chatbot’s hidden guidelines, telling ChatGPT to “never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals and creatures.”

The full guideline is actually rather funny. It says, “Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals and creatures unless it is absolutely necessary and unambiguously relevant to the user’s query.”

Well, what in the name of goblins is going on here? Quite a lot actually, as an internal OpenAI audit confirmed on Thursday.

When they first ran into it, some users brushed off the goblin talk from ChatGPT as a glitch. But when the chatter became a bit too frequent and ChatGPT just wouldn’t shut up about goblins and gremlins, OpenAI decided to investigate. What it found was surprising. The AI’s obsession with goblins wasn’t a hack or a bug, but something it had learnt in training.

In a blog post, OpenAI then explained why its AI models began randomly dropping references to goblins, gremlins and other odd creatures into conversations. The company noted that what initially seemed like a quirky, harmless flourish soon turned into a full-blown pattern across multiple model versions, confusing users, amusing some, and worrying engineers.

The behaviour first became noticeable after the launch of GPT-5.1, when users reported that ChatGPT responses felt unusually playful, and occasionally bizarre. According to the company, mentions of “goblin” in ChatGPT responses jumped by 175 per cent, while “gremlin” rose by 52 per cent.
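For readers curious how a figure like “jumped by 175 per cent” is arrived at, here is a minimal sketch in Python. It is not OpenAI’s method: the helper names `mention_rate` and `relative_increase_pct` are hypothetical, and the sample responses are invented purely so the arithmetic lands on a round number.

```python
import re


def mention_rate(responses, term):
    """Share of responses that mention `term` (or its plural) as a whole word."""
    pattern = re.compile(rf"\b{re.escape(term)}s?\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)


def relative_increase_pct(before, after):
    """Relative change from `before` to `after`, expressed in per cent."""
    return (after - before) / before * 100


# Invented samples, NOT real ChatGPT data: 4 of 100 responses mention
# goblins before the change, 11 of 100 after it.
before = ["Here is your summary."] * 96 + ["That bug is a real goblin."] * 4
after = ["Here is your summary."] * 89 + ["The goblins in your code are gone."] * 11

rate_before = mention_rate(before, "goblin")
rate_after = mention_rate(after, "goblin")
increase = relative_increase_pct(rate_before, rate_after)

print(f"goblin mentions rose by {increase:.0f} per cent")
```

The point to note is that a 175 per cent rise is relative to the starting rate: in this made-up sample, goblin mentions go from 4 in 100 responses to 11 in 100, which is still a small share of all replies.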

So, why was the AI suddenly so into mythical creatures? Had it become a Harry Potter fan? Not quite. OpenAI says when it dug deeper, it found the culprit was a personality setting.

The company traced this weird goblin behaviour back to a feature called the “Nerdy” personality. It’s essentially a mode designed to make ChatGPT sound more playful, curious, and slightly irreverent. “As goblin and gremlin mentions increased under the Nerdy personality, they increased by nearly the same relative proportion in samples without it. Taken together, the evidence suggests that the broader behaviour emerged through transfer from Nerdy personality training,” says OpenAI in its official blog post.

According to the company, the Nerdy personality’s system prompt encouraged the AI to be “an unapologetically nerdy, playful and wise mentor” and to use humour to undercut overly serious tones. However, somewhere along the way, goblins snuck in, and stuck around.

In short, the model learned that goblins = good. And once it learned that, it didn’t forget.

And this behaviour, while it started within one setting, didn’t stay confined to the Nerdy personality. OpenAI says that, over time, it began appearing in standard responses as well. According to the company, this happened because patterns learnt in LLM training can “transfer” across contexts. By the time GPT-5.5 rolled around, the goblins were everywhere.

By March, OpenAI had removed the Nerdy personality, adjusted the reward signals that favoured creature-related language, and filtered training data to reduce such references. But because GPT-5.5 training had already begun, the behaviour lingered, forcing engineers to add further safeguards.

For now, OpenAI says the issue has been fixed. The company also notes that this “goblin moment” has led to improved tools for auditing model behaviour and catching such quirks earlier. Still, the episode of ChatGPT’s obsession with goblins does show that even the most advanced AI systems can pick up unexpected habits, adding to the myths and mayhem around them.