Key Takeaways

- An employee successfully faked a hand injury using Google’s Nano Banana AI image generator.

- The HR department approved paid leave immediately without verifying the AI-generated image.

- The viral incident raises serious concerns about AI misuse in workplace verification systems.

- LinkedIn commentators point to toxic work culture as the root cause, not just technology misuse.

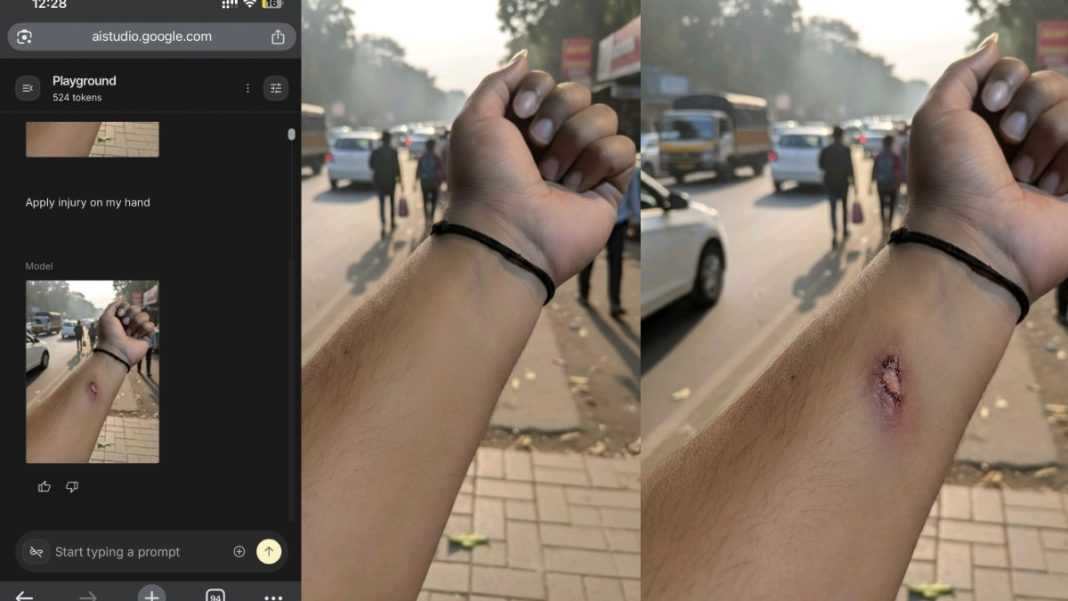

An employee has successfully faked a medical injury using AI to obtain paid leave, exposing critical vulnerabilities in HR verification processes. The incident, which went viral on LinkedIn, demonstrates how easily generative AI can be weaponized for workplace deception.

The AI-Generated Injury Scam

The employee used Google’s upgraded Nano Banana AI image generator to create a hyper-realistic image of an injured hand. After taking a clean photo of their hand, they prompted the AI to “add fake wounds,” resulting in what was described as a “sharp, detailed, medically believable” injury image.

The employee then sent the fabricated image to their HR department via WhatsApp, claiming they had fallen from their bike while commuting to the office and needed medical attention.

HR’s Immediate Approval

Screenshots of the conversation show the leave request was approved within minutes, without anyone questioning the photo’s authenticity. The HR representative expressed concern and escalated the matter to the employee’s manager, who granted paid leave.

“Please go to the doctor and take rest. Your paid leave is approved,” read HR’s response. The twist: there was no actual accident or injury – only an AI-generated wound.

Broader Implications of AI Misuse

The incident has sparked serious discussions about ethical AI use and organizational vulnerabilities. “AI like Gemini Nano is powerful and incredibly useful. The problem is NOT the technology – the problem starts when people use it unethically,” stated the original poster.

The case shows how an AI-generated image can deceive HR staff who accept a photo as proof, with potential implications for other industries, including healthcare and insurance.

Work Culture: The Root Cause?

LinkedIn commentators argued that the incident points to deeper cultural issues rather than just technological misuse. Many said that requiring proof for sick leave indicates a toxic work environment.

“Story aside, if your company needs proof for such leaves, you’re in the wrong place. An employee must be able to utilise his paid leaves at his will,” commented Tharun CV.

Namita Jain added, “It’s a cultural issue, not AI or HR/Manager issue. This is how the culture is created where work pressure and toxicity encourage managers to ask for proofs.”

Another commentator emphasized: “The company needs to build a culture where employees are trusted without having to prove themselves with such photos. When a strong culture of trust is established, employees don’t cheat.”