The fight between the US government and Anthropic is no longer a contract negotiation dispute. It is a clash over killer robots and mass surveillance – and over who decides how artificial intelligence is used in war. At the centre is a blunt question: can a private AI company refuse to let its systems be used for fully autonomous weapons and domestic mass surveillance, even if the Pentagon says such uses are lawful?

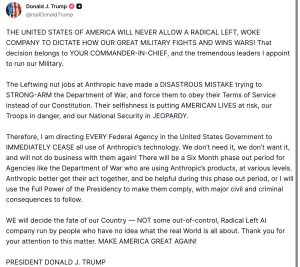

That question has now exploded into public view, with both Anthropic and the US government refusing to budge. US President Donald Trump on Friday ordered federal agencies to immediately stop using Anthropic’s AI tools, a day after the company rejected the Pentagon’s demand for “any lawful use” of its models.

The move threatens to choke off a major revenue stream for the San Francisco-based startup and could slow AI-driven work across US defence and intelligence agencies.

Anthropic vs Trump: The breaking point

Anthropic, co-founded by former OpenAI research head Dario Amodei, has positioned itself as a safety-first AI lab. Its flagship model, Claude, is already deployed across US national security systems, including classified networks.

The company won up to $200 million in Pentagon contracts last year and says its tools are used for intelligence analysis, operational planning and cyber operations.

But Anthropic built in explicit guardrails. It says it will not allow its AI to be used for:

- Mass domestic surveillance of US citizens

- Fully autonomous weapons systems that remove humans from the loop

In a public statement, Amodei said such uses either undermine democratic values or exceed what current AI systems can safely handle.

The Pentagon disagreed.

According to officials, contractors cannot dictate how the military uses tools once they are lawfully acquired. The Department of Defense set a deadline for Anthropic to agree to unconditional use. When the company refused, the standoff escalated.

Defense Secretary Pete Hegseth announced the Pentagon would move to designate Anthropic a “supply chain risk” – a label typically used for foreign adversaries.

A nuclear option

The supply chain designation would effectively blacklist Anthropic from the defense industrial base – tens of thousands of contractors who do business with the Pentagon.

Attorneys speaking to local media described the move as the “contractual equivalent of nuclear war.”

President Trump went further on Truth Social, accusing Anthropic of trying to dictate how the military fights wars. He called the company’s leadership “left wing nut jobs” and said he would use the “Full Power of the Presidency” if necessary to force compliance.

He ordered a six-month phase-out of Anthropic’s technology across all federal agencies.

The rhetoric was not subtle. Nor were the stakes.

What did the Pentagon want from Anthropic?

At the heart of the dispute is the Pentagon’s insistence that AI vendors agree to “any lawful use” of their systems.

Anthropic sought two explicit exceptions:

- No use for mass domestic surveillance

- No use in fully autonomous lethal weapons

The Pentagon has said it has no interest in those applications. But it refused to accept contractual limits.

That tension also reflects a broader shift – how AI is moving from chatbots and code assistants into battlefield logistics, targeting systems, and intelligence fusion platforms.

Human rights groups have long warned about so-called “killer robots” – weapons that select and engage targets without human oversight. While partially autonomous systems are already used in conflicts such as those in Ukraine and Gaza, fully autonomous lethal systems remain controversial.

Anthropic’s position is that current frontier AI models are not reliable enough for that role.

The Pentagon’s position is that it, not Silicon Valley, determines acceptable military doctrine.

OpenAI steps in, or not?

Determined to get what it wants, the Trump administration has reportedly approached Anthropic’s rival, OpenAI, to fill the gap.

The rivalry between Anthropic and OpenAI dates back to 2021, when Amodei and several senior researchers left OpenAI over what he later described as “directional differences” – particularly around safety and alignment.

Yet, OpenAI CEO Sam Altman reportedly told employees that his company also does not support mass surveillance or autonomous lethal weapons.

In an internal memo, Altman wrote that humans should remain “in the loop” for high-stakes decisions and that OpenAI would seek red lines similar to Anthropic’s.

Row becomes political, ideological

Trump framed the conflict as ideological. In his posts, he accused Anthropic of trying to “STRONG-ARM” the Department of War and claimed its stance endangered American troops.

Democratic Senator Mark Warner criticised the administration’s move, warning that national security decisions should not be driven by political considerations.

Meanwhile, hundreds of employees from OpenAI and Google DeepMind signed an open letter urging AI companies not to be divided by Pentagon pressure.

The letter explicitly referenced concerns about domestic mass surveillance and autonomous killing.

The fight is no longer confined to boardrooms. It is spilling into the broader AI workforce.

What is at stake: Revenue shock, operational risk

For Anthropic, the immediate risk is financial. Government contracts and national security deployments are central to its growth strategy ahead of a potential IPO.

Being labelled a supply chain risk could damage not only federal contracts but also private-sector relationships.

For the government, the costs are different.

Claude is already embedded in intelligence and defense workflows. A forced phase-out could disrupt planning systems, analysis pipelines and cybersecurity operations.

Replacing a frontier AI model is not like swapping office software. It involves retraining systems, migrating data, and revalidating security authorisations.

The six-month timeline may prove ambitious.

Elon Musk in the wings?

There is also a possibility that Elon Musk could step in with Grok, the AI model developed by his company xAI.

Musk has positioned himself as both a critic of “woke AI” and an advocate for aggressive AI development in national security contexts.

If Grok were to secure federal contracts, the AI race would take on yet another political dimension.

Government vs Silicon Valley: A familiar fault line

This is not the first time Silicon Valley and the Pentagon have clashed. In 2018, Google employees protested the company’s involvement in a Pentagon drone analysis project. The company eventually withdrew from that contract.

Since then, major tech firms have re-engaged with defense work. Amazon, Microsoft and others now compete actively for national security contracts.

What has changed is the power of the technology itself.

Frontier AI systems can synthesise intelligence, generate operational plans, and assist in cyber operations at speeds that compress decision-making timelines.

The idea of “killer robots” no longer sounds like science fiction. Nor does the prospect of AI-enabled mass surveillance that can stitch together location data, browsing history and public records into a near-total portrait of a person’s life.

Anthropic argues that the law has not caught up with those capabilities.

The Pentagon argues that it operates within existing law – and that national security cannot hinge on private companies’ ethical preferences.

What comes next?

Anthropic could challenge the designation in court. Legal experts say the “supply chain risk” label, typically reserved for foreign adversaries, may face scrutiny if applied to a US company.

But litigation would take time.

In the meantime, the US government appears to be consolidating partnerships with AI firms willing to accept broader deployment terms.

The broader question remains unresolved.

As AI systems grow more capable, the distance between lab research and battlefield deployment shrinks. The debate over mass surveillance and fully autonomous weapons will not disappear with one contract.

For now, the message from Washington is clear: in matters of war, the state decides.

Anthropic’s reply is equally clear: not every lawful use is a safe one.