Google has unveiled a new technique that could dramatically reduce the amount of memory needed to run artificial intelligence (AI) models. Called TurboQuant, the technique was announced by Alphabet’s Google this week and may help ease the global memory shortage. According to the company, TurboQuant shrinks the key-value cache memory used by large language models, such as those powering chatbots like Google’s own Gemini, by at least six times, while making them up to eight times faster. Notably, Google claims all of this comes with zero loss in accuracy.

“Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency,” Google Research said in a post on X (formerly Twitter).

What is TurboQuant and how does it solve the ‘memory problem’?

When users have a long conversation with an AI chatbot, the AI needs to remember everything that came before. It stores that context in what is known as a key-value cache (the key and value vectors the model computes for every earlier token), which grows larger the longer the conversation goes on. This consumes an enormous amount of RAM, and the memory fills up fast.
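To see why the cache grows so quickly, here is a rough back-of-the-envelope estimate in Python. It is a sketch under stated assumptions: the model dimensions below (32 layers, 8 key-value heads, 128 values per head, 16-bit numbers) are hypothetical and do not describe Gemini or any specific model, and the 6x figure simply applies Google's headline claim to the result.

```python
GIB = 1024 ** 3  # bytes in one gibibyte

def kv_cache_bytes(num_tokens, num_layers=32, num_kv_heads=8,
                   head_dim=128, bits_per_value=16):
    """Estimate the KV cache size for one conversation.

    For every token, each layer stores one key vector and one value vector
    per key-value head. The default dimensions are hypothetical.
    """
    values_per_token = 2 * num_layers * num_kv_heads * head_dim  # 2 = key + value
    return values_per_token * num_tokens * bits_per_value / 8   # bits -> bytes

for tokens in (1_024, 8_192, 32_768, 131_072):
    full = kv_cache_bytes(tokens)
    print(f"{tokens:>7} tokens: {full / GIB:6.2f} GiB uncompressed, "
          f"about {full / 6 / GIB:5.2f} GiB at 6x compression")
```

Under these assumptions the cache grows linearly with conversation length: 32,768 tokens already needs 4 GiB, a sizeable chunk of a phone's or laptop's RAM, while a sixfold reduction would bring it under 1 GiB.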

On everyday devices like phones and laptops, this creates real limitations: the AI can only handle short conversations before it slows down or runs out of memory entirely.

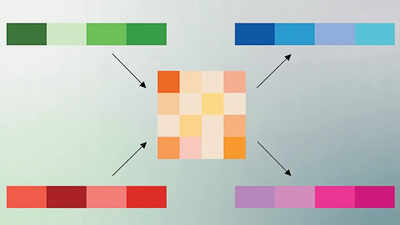

According to Google, TurboQuant works like a compression tool, shrinking this cached data to one-sixth of its original size or less.
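The announcement quoted here does not explain how TurboQuant works internally, so the Python sketch below shows only the general idea behind this kind of compression: storing each number with fewer bits. It uses plain uniform scalar quantization, a much simpler, generic technique, not TurboQuant's algorithm; the 128-value random vector, the 3-bit setting and the helper names `quantize` and `dequantize` are illustrative assumptions.

```python
import random

def quantize(values, bits):
    """Generic uniform scalar quantization (NOT TurboQuant's method).

    Each float is mapped to an integer code in [0, 2**bits - 1]; only the codes
    plus the minimum value and step size need to be stored.
    """
    lo, hi = min(values), max(values)
    step = (hi - lo) / (2 ** bits - 1) if hi > lo else 1.0
    codes = [round((v - lo) / step) for v in values]
    return codes, lo, step

def dequantize(codes, lo, step):
    """Approximately reconstruct the original floats from the codes."""
    return [lo + c * step for c in codes]

random.seed(0)
vector = [random.gauss(0.0, 1.0) for _ in range(128)]  # stand-in for one cached key vector
codes, lo, step = quantize(vector, bits=3)
restored = dequantize(codes, lo, step)

original_bits = 16 * len(vector)             # stored as 16-bit floats
compressed_bits = 3 * len(vector) + 2 * 16   # 3-bit codes + lo and step as 16-bit floats
max_error = max(abs(a - b) for a, b in zip(vector, restored))
print(f"compression: {original_bits / compressed_bits:.2f}x, max error: {max_error:.3f}")
```

Here 3-bit codes give about 4.9x compression (2,048 bits down to 416), with every value off by at most half a step. Naive schemes like this trade accuracy for size, which is why a 6x reduction with zero accuracy loss, as Google claims for TurboQuant, would be notable.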

Google also pointed to uses beyond chatbots, particularly semantic search. “Techniques like TurboQuant are critical for this mission. They allow for building and querying large vector indices with minimal memory, near-zero preprocessing time, and state-of-the-art accuracy. This makes semantic search at Google’s scale faster and more efficient. As AI becomes more integrated into all products, from LLMs to semantic search, this work in fundamental vector quantization will be more critical than ever,” Google said.
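The same idea applies to the vector indices Google mentions: if every stored vector is kept in compressed form, an index takes far less memory but can still be searched. The Python sketch below is a hypothetical illustration using generic uniform quantization (again, not TurboQuant itself) on 1,000 random 256-dimensional vectors; it finds the stored vector with the largest inner product to a query, scoring against the compressed copies, and compares that with an exact full-precision search.

```python
import random

def quantize(vec, bits=4):
    """Generic uniform scalar quantization (illustrative, not TurboQuant)."""
    lo, hi = min(vec), max(vec)
    step = (hi - lo) / (2 ** bits - 1) if hi > lo else 1.0
    return [round((v - lo) / step) for v in vec], lo, step

def approx_dot(query, entry):
    """Inner product of a full-precision query with a quantized index entry."""
    codes, lo, step = entry
    return sum(q * (lo + c * step) for q, c in zip(query, codes))

def exact_dot(a, b):
    return sum(x * y for x, y in zip(a, b))

random.seed(1)
dim, count = 256, 1_000
database = [[random.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(count)]
# Codes are plain Python ints here for readability; bit-packed, each 4-bit code
# would take a quarter of the space of a 16-bit float.
index = [quantize(v) for v in database]

query = [x + random.gauss(0.0, 0.1) for x in database[42]]  # noisy copy of item 42
best_approx = max(range(count), key=lambda i: approx_dot(query, index[i]))
best_exact = max(range(count), key=lambda i: exact_dot(query, database[i]))
print(best_approx, best_exact)
```

With these settings both searches should return item 42: the quantization error is small compared with the gap between the matching vector's score and everyone else's.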

If TurboQuant delivers on its promise, the practical implications are significant, particularly for data centres, which consume huge quantities of High-Bandwidth Memory (HBM); that demand has squeezed the overall supply of consumer RAM. Phones, laptops and cheaper computers could also run more powerful AI tools without needing high-end hardware. Responses would feel faster, and the cost of running AI applications would fall.