If there’s one company that has truly ridden the AI wave to the top, it is Nvidia. Today, it sits at the centre of this technological revolution, with its chips powering everything from chatbots to massive data centres. If a company needs to train a new AI system, it needs Nvidia chips. If it needs to serve AI models to consumers, it needs Nvidia chips. Sort of.

But how did Nvidia get here? Nvidia CEO Jensen Huang offers a story. Before 2016, Nvidia looked very different. It was well known, certainly, but largely within the gaming community. However, Nvidia had bigger plans for the future, and the story really started in a tiny room.

Speaking on a podcast with Joe Rogan, CEO Jensen Huang revealed that the turning point for the company came when it began betting heavily on deep learning technologies, long before the rest of the industry caught on. “We were convinced that we ought to put everything into this and help create this industry because we’re going to solve a whole bunch of interesting problems. And that was in 2012,” he said.

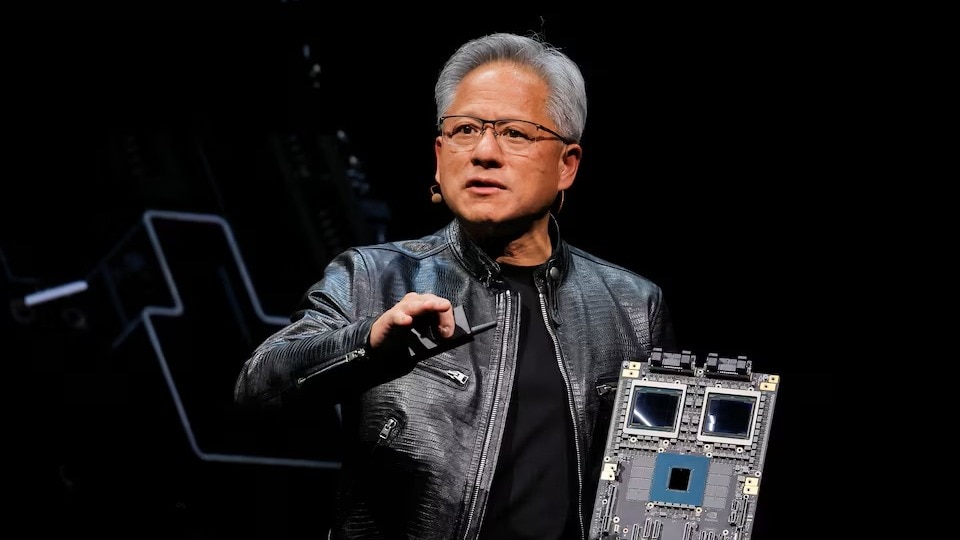

Huang went on to explain that Nvidia built its first true AI machine around this idea, the DGX-1. It was designed specifically for deep learning and came with a hefty price tag of around $300,000. “The DGX-1 was $300,000. It cost Nvidia a few billion dollars to make the first one. And instead of two chips SLI, we connected eight chips with a technology called NVLink — it’s basically SLI supercharged,” Huang revealed.

SLI, as gamers would recall, was a technology that allowed PC gamers to use two graphics cards simultaneously by connecting them with a ribbon cable.

While Nvidia’s machine was powerful and ahead of its time, there was just one problem. Nobody wanted it. Huang said that when Nvidia unveiled the DGX-1, there were no buyers. Most companies didn’t understand what it was, let alone why they needed it.

“When I announced this thing, nobody in the world wanted it. I had no purchase orders, not one. Nobody wanted to buy it. Nobody wanted to be part of it. The audience was completely silent. They had no idea what I was talking about,” he said.

The first customer

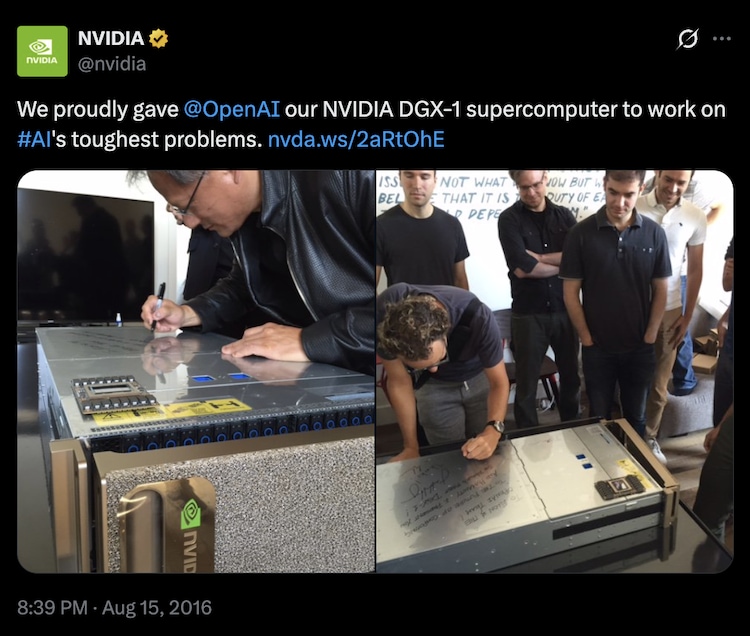

However, everything changed when Elon Musk showed interest. Huang recalled that Musk reached out and said he had a company that could use such a machine. There was just one catch: it was a non-profit. That company was OpenAI.

Despite the high cost and uncertainty, Nvidia decided to take a leap of faith. In 2016, Huang personally delivered a DGX-1 to Musk in San Francisco. However, he was in for a surprise. The customer for this advanced supercomputer wasn’t a cutting-edge lab, but a small group of researchers working out of a tiny room. Among them was Ilya Sutskever, who would later play a crucial role in shaping modern AI.

“I walked up to the second floor where they were all kind of in a room smaller than your place here. And that place turned out to have been OpenAI, 2016. Just a bunch of people sitting in a room,” Huang shared.

Fast forward a decade, and the work that began with that single machine in that cramped room has changed everything. It laid the groundwork for ChatGPT and pushed generative AI into the mainstream.

Today, Nvidia isn’t just a chipmaker. It owns the engine behind every major LLM on the planet. Well, almost every one: the exceptions are models built in China and those from Google, which uses its own Tensor Processing Units (TPUs). And as AI has exploded, it has also made Nvidia the biggest company in the world in terms of market capitalisation.