The clash between Anthropic and the US government has now reached the courts. The Claude AI maker had been in negotiations with the Pentagon for weeks about the use of its AI in classified settings, but the talks finally broke down late last month when Anthropic said the US Department of Defence (DoD) refused to accept its two red lines.

Top updates in the US vs Anthropic lawsuit:

1) After the US government labelled it a ‘supply chain risk’, Anthropic filed two lawsuits against the DoD and the broader administration. The lawsuits claim that the Trump administration’s decision to blacklist the AI startup is an attempt to punish it for its AI guardrails.

“The federal government retaliated against a leading frontier AI developer for adhering to its protected viewpoint on a subject of great public significance — AI safety and the limitations of its own AI model — in violation of the Constitution and laws of the United States,” Anthropic said in its lawsuit.

2) The lawsuits were filed after Defence Secretary Pete Hegseth formally labelled Anthropic a supply chain risk last week.

3) Anthropic told a judge on Tuesday that it could lose billions of dollars in revenue this year due to the Trump administration’s decision to label it as a supply chain risk.

4) Appearing before US District Judge Rita F. Lin at a San Francisco hearing, Anthropic’s attorney argued that the federal government’s actions have led to over 100 enterprise customers contacting the company to express concerns about continuing their contracts.

5) Anthropic’s lawyer also claimed that the US government has been reaching out to its customers to pressure them to stop working with the company.

“And this is all the predictable result of the defendants’ actions and the uncertainty they’ve created, as well as the fact that defendants have been affirmatively reaching out to our customers and pressuring them to stop working with Anthropic and switch to other AI companies,” the company’s lawyer said in court.

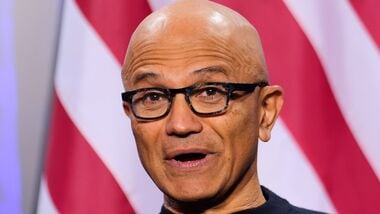

6) Microsoft showed its support for Anthropic in court. The company, in a recent filing, warned that the Pentagon’s blacklisting of Anthropic will have “negative ramifications for the entire technology sector and American business community.”

“This is not the time to put at risk the very AI ecosystem that the Administration has helped to champion,” a lawyer for the company said in court.

7) Just a day earlier, 37 OpenAI and Google employees had also filed an amicus brief in court in support of Anthropic.

“The government’s designation of Anthropic as a supply chain risk was an improper and arbitrary use of power that has serious ramifications for our industry,” the brief read.

8) OpenAI CEO Sam Altman had earlier also opposed Anthropic’s designation as a supply chain risk. He said that OpenAI’s own deal with the Pentagon was intended to defuse tensions.

9) Emil Michael, the under secretary of defence for research and engineering, said in an interview with Bloomberg that he sees little chance of resuming negotiations with Anthropic over the use of its AI tools for classified military work.

10) Claude is currently the only AI model the US uses in classified military settings. It was reportedly used by the US in both the capture of Venezuelan president Nicolás Maduro and the recent strikes on Iran.