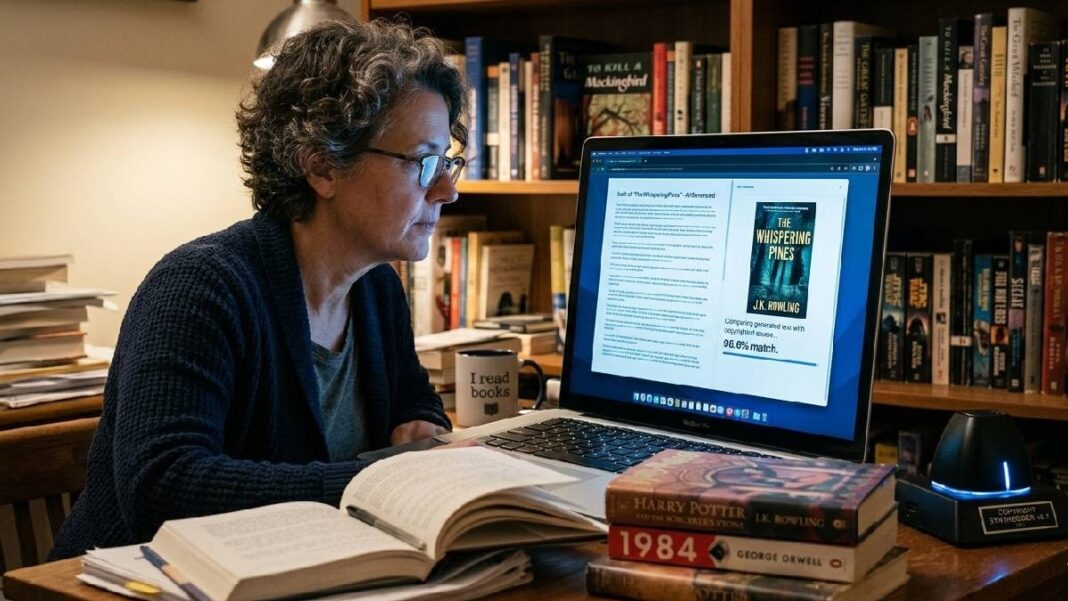

Several authors around the world have accused AI companies of using their books to train AI models. Some of these cases have reached the courts, where companies have argued in their defence that their models do not keep exact copies of the copyrighted books used during training and cannot reproduce them. New research suggests otherwise, however, which could mean fresh legal trouble for these companies.

Researchers at Cornell University claim that fine-tuning, that is, further training a model that has already been trained, can enable AI models to reconstruct up to 85–90 percent of copyrighted books. The researchers also said the models were able to reproduce books that were not included in the fine-tuning data.

AI companies have added safeguards to prevent their models from reproducing exact text from copyrighted books, articles, and other protected content. However, the researchers claim that fine-tuning can change how these models behave, allowing them to expand short plot summaries into full-length text.

The researchers ran experiments on models including GPT-4o, Gemini 2.5 Pro, and DeepSeek-V3.1. After fine-tuning, these models could expand plot summaries to reconstruct up to 85–90 percent of copyrighted books, even when those books were not used during the fine-tuning stage.

Recall extended beyond the trained author

The researchers also found that the fine-tuned models' recall was not limited to the author whose works were used in fine-tuning. Although the models were fine-tuned only on novels by Haruki Murakami, they could recall verbatim passages from books by more than 30 other authors.

The researchers also fine-tuned models using random author pairs and public-domain text, and found that the models still reproduced text from copyrighted books at similar levels. When the models were instead fine-tuned on synthetic text that did not come from real books, however, they did not reproduce copyrighted passages.

Study raises broader industry concerns

The researchers concluded that fine-tuning models on real authors' texts can reactivate material memorised during earlier training. They described this as both a security problem and an industry-wide one, because multiple models showed the same behaviour.

The findings also raise questions about fair use rulings in which courts, assuming that safeguards prevent AI systems from reproducing protected content, have allowed AI companies to use copyrighted material.