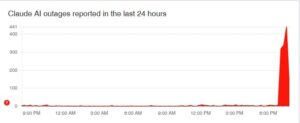

Claude outage: Several users have started reporting issues with Claude on Downdetector. At the time of writing, there are over 4,000 reports, a high number for such a brief period.

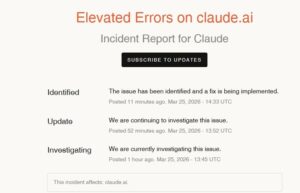

Claude has acknowledged the outage on its status page, noting a rise in errors. Problems were most often reported with Claude Chat (48%), the app (28%) and Claude Code (14%).

“The issue has been identified and a fix is being implemented,” it said.

Meanwhile, several users reported the Claude outage on X. “Error Alert on Claude: ‘This isn’t working right now. You can try again later.’ It worked on the phone app,” one user wrote.

“its down for me,” another said.

“I cannot use it ‘This isn’t working right now. You can try again later’ Last 15 minutes or so,” a third person wrote.

Anthropic’s new feature for AI assistant Claude

Anthropic has unveiled a new feature for its AI assistant Claude that lets it perform coding tasks with greater autonomy while retaining a layer of safety checks.

This feature, called “auto mode,” is currently available as a research preview and aims to reduce the need for developers to manually approve each action an AI system takes. Instead, the model assesses whether a task is safe to carry out on its own, seeking a balance between efficiency and supervision.

Auto mode works by evaluating each action before it is executed, looking for potential hazards such as unintended behavior or prompt injection attacks, in which concealed instructions can coerce the AI into taking actions that go beyond the user’s intent. Actions considered safe are executed automatically, whereas those identified as risky are blocked.
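To make the evaluate-then-execute idea concrete, here is a minimal Python sketch of such a gate. It is purely illustrative. Anthropic has not published its criteria, so the `RISKY_MARKERS` list, the `Action` type, and the `is_safe` and `auto_mode` functions are all hypothetical names, and the keyword check is an invented stand-in for whatever the real safety layer does.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical markers, for illustration only. These are NOT Anthropic's
# actual criteria, which the company has not disclosed.
RISKY_MARKERS = ("rm -rf", "ignore previous instructions")


@dataclass
class Action:
    description: str          # human-readable summary of what the action does
    run: Callable[[], str]    # callable that performs the action


def is_safe(action: Action) -> bool:
    """Toy check: an action is safe if its description has no risky marker."""
    text = action.description.lower()
    return not any(marker in text for marker in RISKY_MARKERS)


def auto_mode(actions: List[Action]) -> List[str]:
    """Evaluate each action before running it: run safe ones, block the rest."""
    log = []
    for action in actions:
        if is_safe(action):
            log.append(f"executed: {action.run()}")
        else:
            # The action's callable is never invoked when it is flagged.
            log.append(f"blocked: {action.description}")
    return log


if __name__ == "__main__":
    demo = [
        Action("run unit tests", lambda: "tests passed"),
        Action("rm -rf /tmp/build", lambda: "deleted"),
    ]
    for line in auto_mode(demo):
        print(line)
```

In this sketch the check runs before the action's callable is ever invoked, which mirrors the "evaluate prior to execution" ordering described above. A real system would presumably rely on a far richer assessment than keyword matching.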

The update builds on earlier tools developed by Anthropic, such as Claude Code Review and Dispatch, which are designed to automate development workflows. Auto mode goes a step further by shifting the decision about when approval is required from the user to the AI.

This shift reflects a broader trend in the AI industry, where organizations like OpenAI and GitHub are building systems that can perform tasks independently. As these technologies progress, the ongoing challenge is to ensure that greater autonomy does not undermine reliability or security.

Anthropic has not revealed the specific criteria employed by its safety layer to distinguish between safe and unsafe actions, which may be a crucial factor for developers assessing this feature.

The company said auto mode will be available to enterprise and API users in the near future. For now, it is restricted to certain model versions and is recommended for use in controlled or sandboxed environments to reduce potential risks during the testing phase.